Posted 30 August 2022

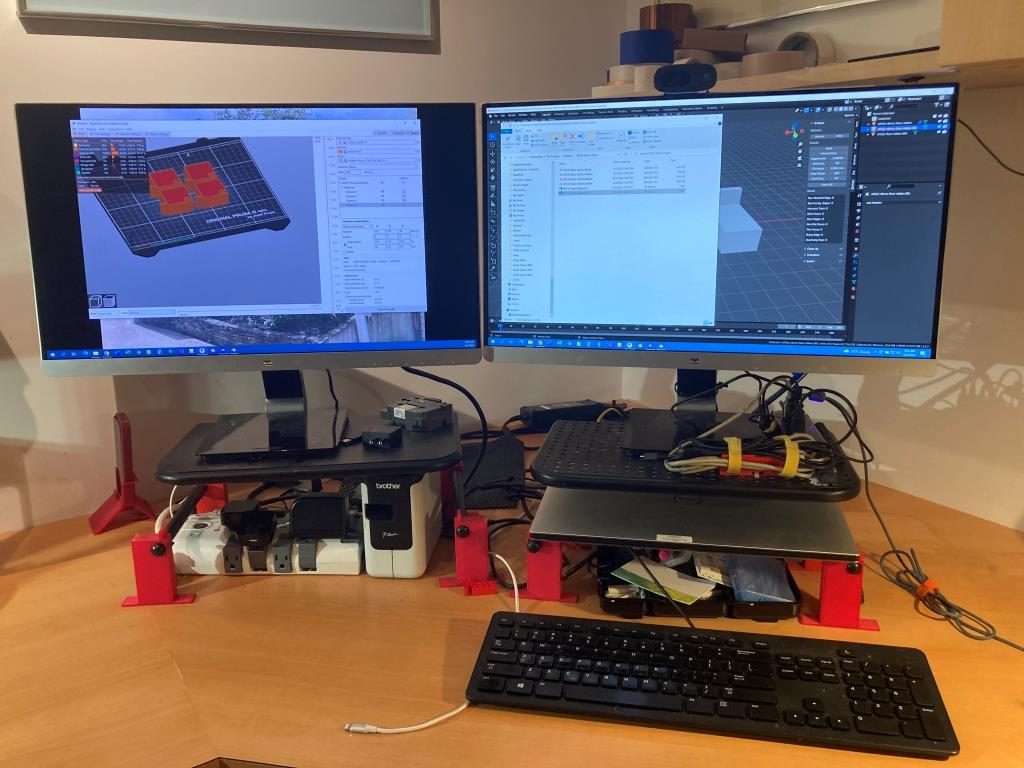

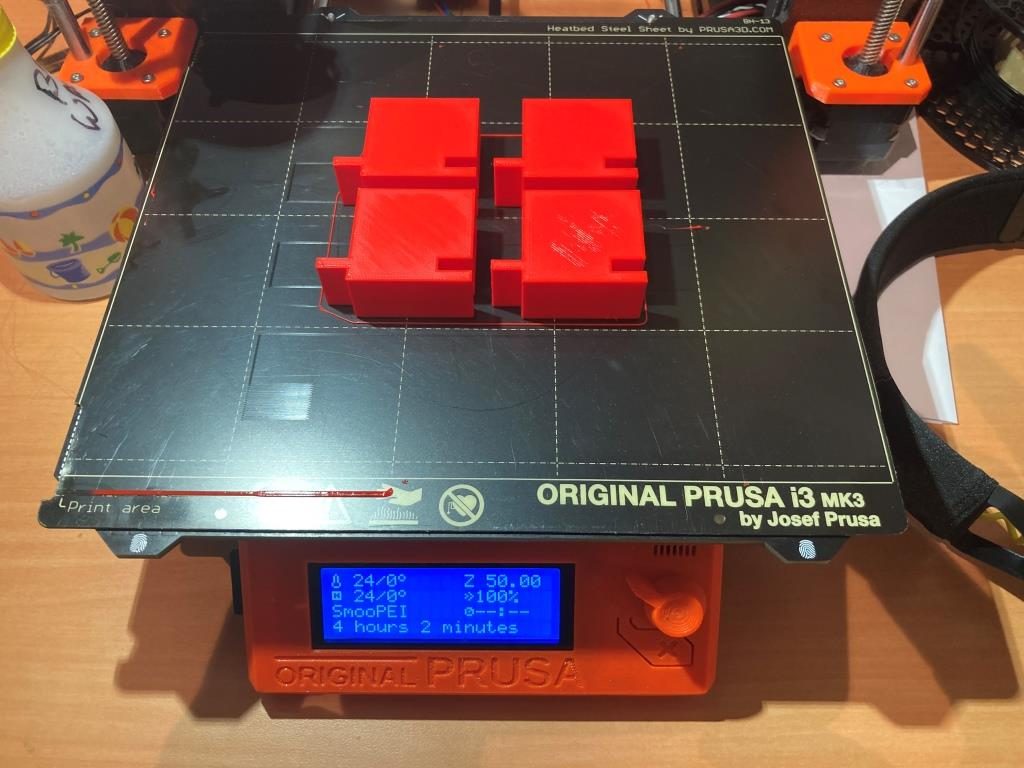

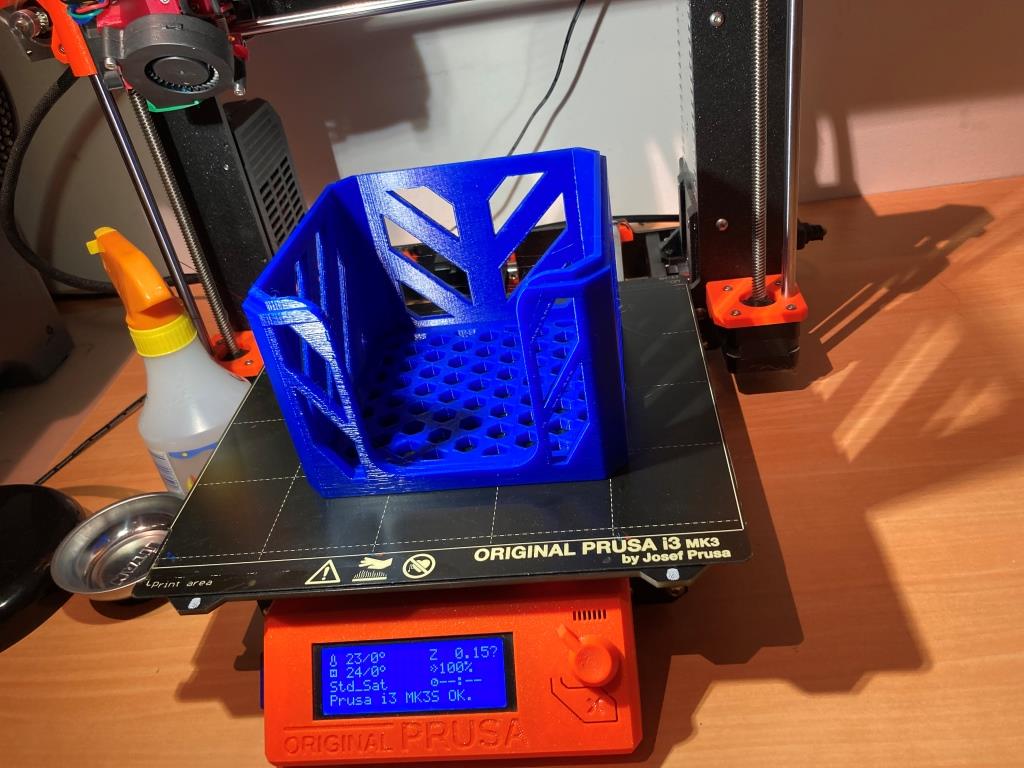

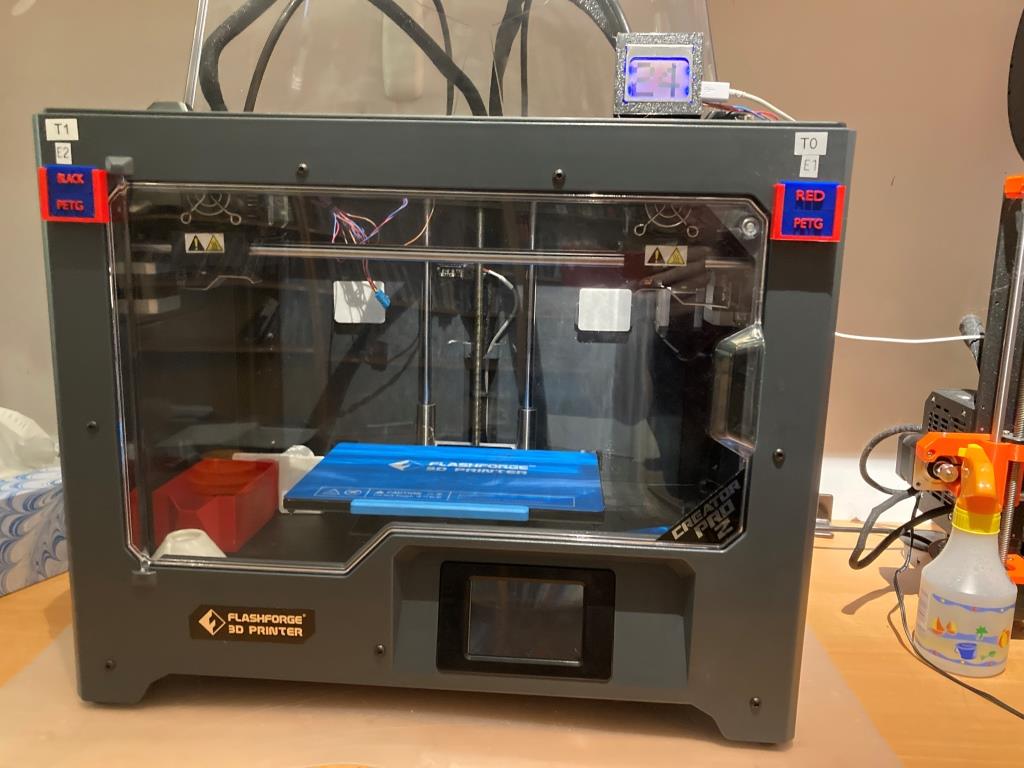

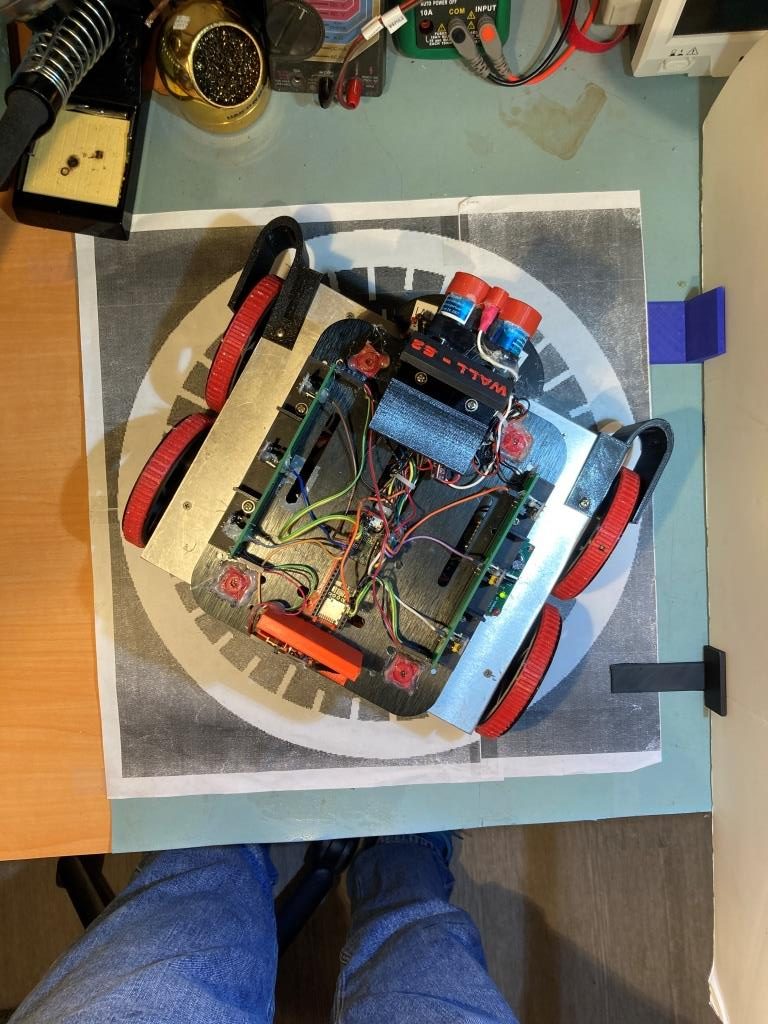

Exactly one year ago I posted about receiving my then-new FlashForge Creator PRO 2 IDEX 3D printer. Since then I have made many successful prints and have been very happy with the machine. Then just a few weeks ago I started having serious problems with prints not sticking to my flexible build plate. Such problems occur regularly with 3D printers, and they are usually fairly easy to troubleshoot and fix, but this time I found the touch-screen GUI on the FFCP2 IDEX machine to be more of a hindrance than a help in working my way through the problem. This post describes the issues I encountered and suggests some changes to the GUI to resolve them. The firmware used is the latest version, V1.8.

Extruder Z-axis Offset Calibration:

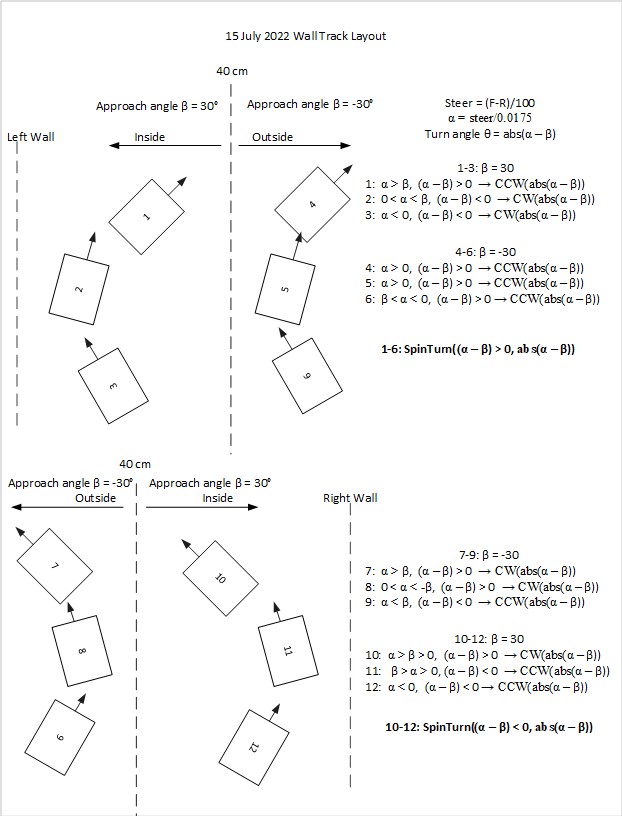

One of the most common causes of non-sticking prints is an incorrect extruder Z-axis offset. If the offset is too large, the filament won’t contact the print bed with enough area for the first layer to adhere, and this can cause the BOD (ball of death) as the filament balls up around the extruder. When I adjust the offset on my Prusa MK3S+ single-extruder printer, the display shows the current offset so adjustments can be made from a known starting position. Unfortunately the FFCP2 GUI for some reason forces the user to calibrate both extruders every time, and starts by setting the offset on both extruders to +2mm, undoubtedly to make sure the nozzles are well away from the print bed. This also erases the existing offset values, so there is no way to slightly ‘tweak’ an offset one way or the other; both offsets have to be set from the beginning every time. For first-time users, this might be OK, but for experienced users it is a royal PITA.

Imagine you have done some minor maintenance on the right extruder, but the left extruder is printing perfectly. Now you need to recalibrate the right extruder, but don’t want to mess with your finely-tuned left extruder. Nope – can’t do that. As soon as you select ‘Z axis Calibration’ you are doomed! The offset values for both extruders fly out the window and you are back to using the supplied plastic spacer to calibrate both extruders ‘by feel’. Doesn’t matter that you had that left extruder all dialed in – you are hosed!

Or, maybe you are happily running PETG on both extruders, but now you want to make a print that requires a dissolvable filament, like AquaSys120 or similar. You change out the filament on one extruder and make a test print using just the dissolvable filament and find it either isn’t extruding at all (offset too close to the bed) or more likely isn’t adhering to the bed due to subtle differences in the texture/stickiness of AquaSys120 filament vs PETG. You know the way to fix this is to slowly vary the z-offset of the AquaSys120 extruder, but you don’t want to mess with the nicely printing PETG extruder. Sorry – go directly to jail, do not pass GO, and do not collect $200.

The only way to handle either of these scenarios is to have already recorded the old Z offset values for both extruders (and who does that?) so you can go through the ‘you must calibrate both every time’ procedure, dial both extruder offsets back to where you had them before, and then adjust slightly from there. Make sure you write the new values down, because if you want to make another tweak, or you make a filament change a month from now, you have to start all over again. ARGGHHHH!

As I mentioned above, this might be OK for first-time users, but becomes a royal PITA for more experienced users. The FFCP2 does have an ‘Advanced’ menu, but this menu only handles X and Y axis tweaks – not the Z axis. This menu should be revised to allow the Z-offset for either extruder to be set independently, and should offer options to start from the default (+2mm) location or from the current z-axis offset position.
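To make the suggestion concrete, here is a minimal Python sketch of the behavior I have in mind. It is purely illustrative and is not FlashForge firmware code: the `ZOffsetStore` class, its method names, and the example offset values are all hypothetical. The only number taken from the printer is the +2mm starting offset.

```python
from dataclasses import dataclass, field

# The safe starting offset the current FFCP2 procedure applies to both extruders.
DEFAULT_OFFSET_MM = 2.0


def _default_offsets() -> dict:
    return {"left": DEFAULT_OFFSET_MM, "right": DEFAULT_OFFSET_MM}


@dataclass
class ZOffsetStore:
    """Hypothetical per-extruder Z-offset storage (not actual firmware)."""
    offsets_mm: dict = field(default_factory=_default_offsets)

    def begin_calibration(self, extruder: str, from_current: bool = True) -> float:
        """Start calibrating ONE extruder; the other extruder is never touched.

        from_current=True keeps the stored offset as the starting point;
        from_current=False resets only this extruder to the +2mm default.
        """
        if not from_current:
            self.offsets_mm[extruder] = DEFAULT_OFFSET_MM
        return self.offsets_mm[extruder]

    def nudge(self, extruder: str, delta_mm: float) -> float:
        """Move one extruder's offset by a small step and return the new value."""
        new_value = round(self.offsets_mm[extruder] + delta_mm, 3)
        self.offsets_mm[extruder] = new_value
        return new_value


# Example: tweak the right extruder without disturbing a dialed-in left one.
store = ZOffsetStore({"left": 0.15, "right": 0.20})  # made-up offsets
start = store.begin_calibration("right")             # starts from 0.20, not +2mm
store.nudge("right", -0.05)
print(store.offsets_mm)
```

The point of the sketch is simply that the stored offset for each extruder is kept unless the user explicitly asks to start over, and that the value is always available for display.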

Z-axis Calibration Delays Due to Extruder Cooling Requirement:

When troubleshooting a printing problem, I might go through several Z-axis calibration procedures. However, each time I start the procedure, the printer forces me to wait for the extruders to cool to room temperature, which takes several minutes. ARGHHH! This is stupid for two reasons. First, I would think I’d want to calibrate the Z-axis offset at the normal operating temperature, not at room temperature, so that any temperature-related mechanical changes are taken into account. Second, if FlashForge decided that any such mechanical changes were inconsequential, then it shouldn’t matter at what temperature the procedure is conducted, and so there shouldn’t be any delay at all. This seems to be just one of those things where the programmer decided the process should always start with room-temperature extruders and never gave any thought to the tradeoff between programming ease and customer frustration.

Print File Names:

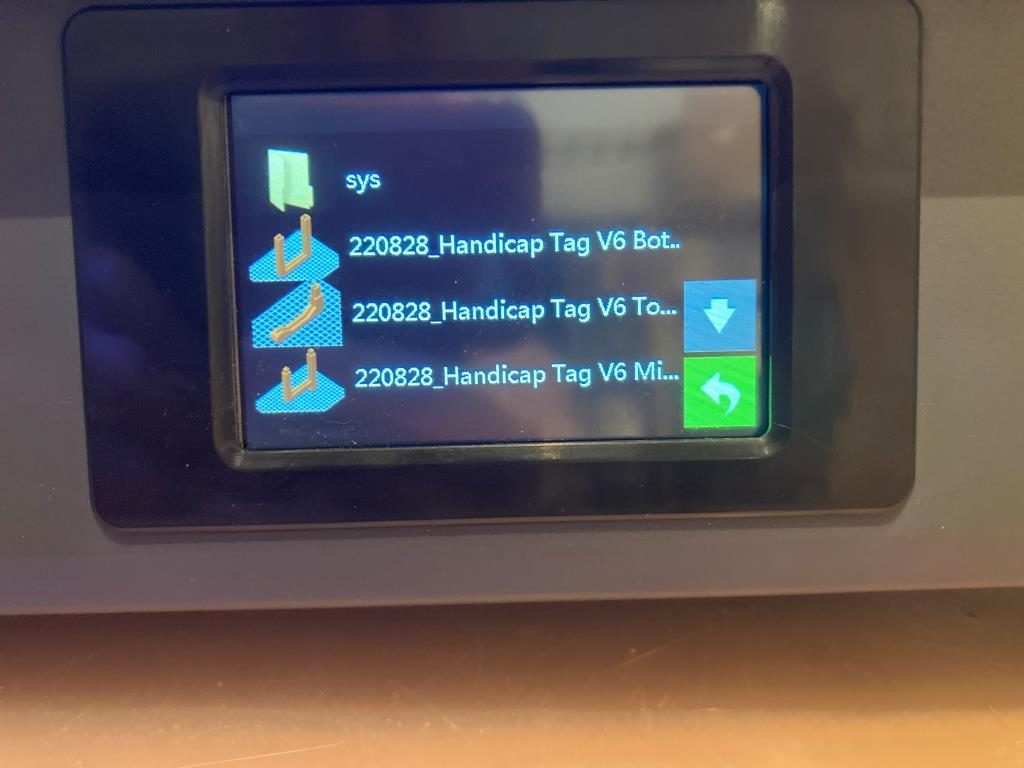

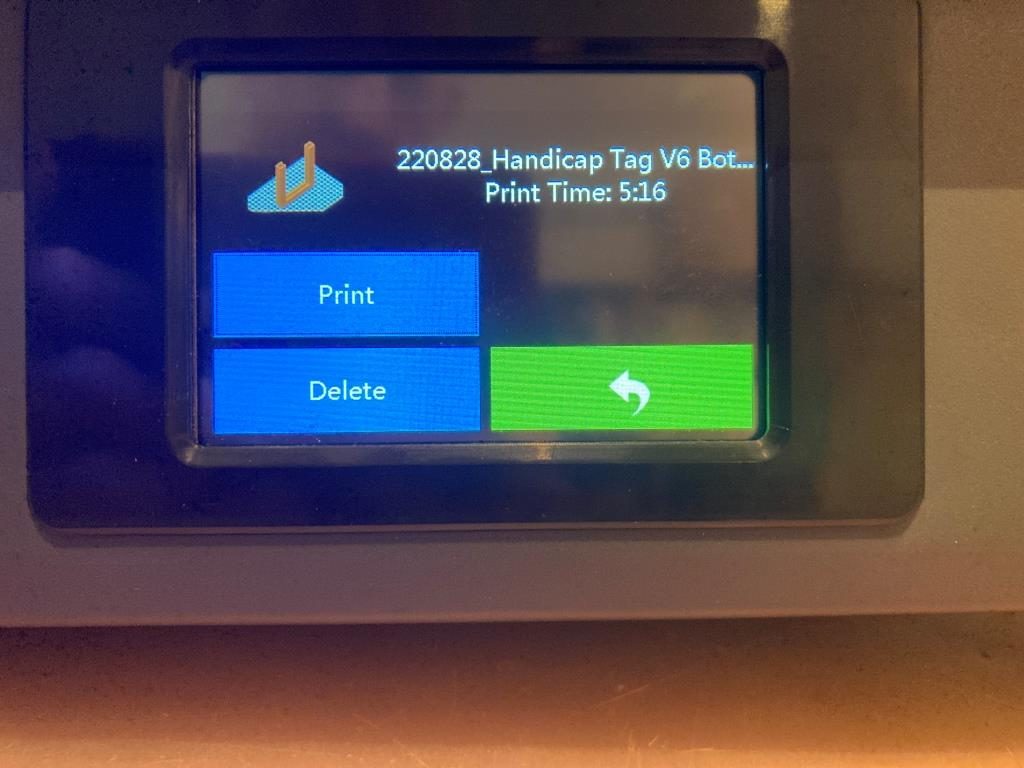

The ‘Print’ menu shows a partial list of the available print files, and allows the user to scroll up and down the list as necessary. However, as shown in the photos above, print file names are truncated after N characters, so similarly named files (I use version numbers a lot) all look the same. Moreover, when a file is actually selected by tapping on the name, the name still isn’t shown full length. My Prusa MK3S+ printer has the same limited display width, but solves the problem by repeatedly scrolling through the full name.
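For what it’s worth, scrolling a long name through a fixed-width field is not hard to do. The Python sketch below shows one way it might work. It is only an illustration, not Prusa or FlashForge firmware code: the `marquee_frames` function, the 8-character width, and the file name are all made up for the example.

```python
def marquee_frames(name: str, width: int, gap: int = 3):
    """Yield the successive fixed-width windows for one full scroll of a name.

    Names that already fit are yielded once, padded to the field width.
    Longer names wrap around, with `gap` spaces between the end and the start.
    """
    if len(name) <= width:
        yield name.ljust(width)
        return
    padded = name + " " * gap
    doubled = padded + padded  # lets a window wrap past the end of the name
    for start in range(len(padded)):
        yield doubled[start:start + width]


# Example: a hypothetical versioned file name shown in an 8-character field.
for frame in marquee_frames("bracket_v12.gx", 8):
    print(repr(frame))
```

Redrawing the selected line with the next frame every few hundred milliseconds would let the version number at the end of the name scroll into view.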

Conclusion:

The FlashForge Creator PRO 2 IDEX printer is a great printer, and I have gotten many, many good prints from it over the last year. Even with the frustrations associated with the less-than-perfect GUI, I don’t regret trading down from my previous MakerGear M3-ID Independent Dual Extruder (IDEX) machine. However, I believe the GUI was not given the care and resources it needed to be a first-class example of a 3D printer user interface, and that it should be updated. If it remains in this ‘sort-of-OK-sort-of-clunky’ state, I think it will sour a lot of 3D makers on the FlashForge brand.

FlashForge could actually kill two birds with one stone if they were to open-source the GUI code; then users like me who are frustrated with the current performance could collaborate in making it better.

Stay tuned,

Frank